For a while, a lot of engineering discipline was justified by human limitations.

- We needed specs so people could align.

- We needed clean architecture so devs could understand, navigate, and safely change the system.

- We needed code reviews so other engineers could catch mistakes.

Now that AI can write code, review code, suggest tests, and help refactor, it is tempting to think some of that discipline matters less.

What I’m learning is the opposite.

AI is the way, but that does not make it safe by default. Adopting AI is not the same as improving engineering.

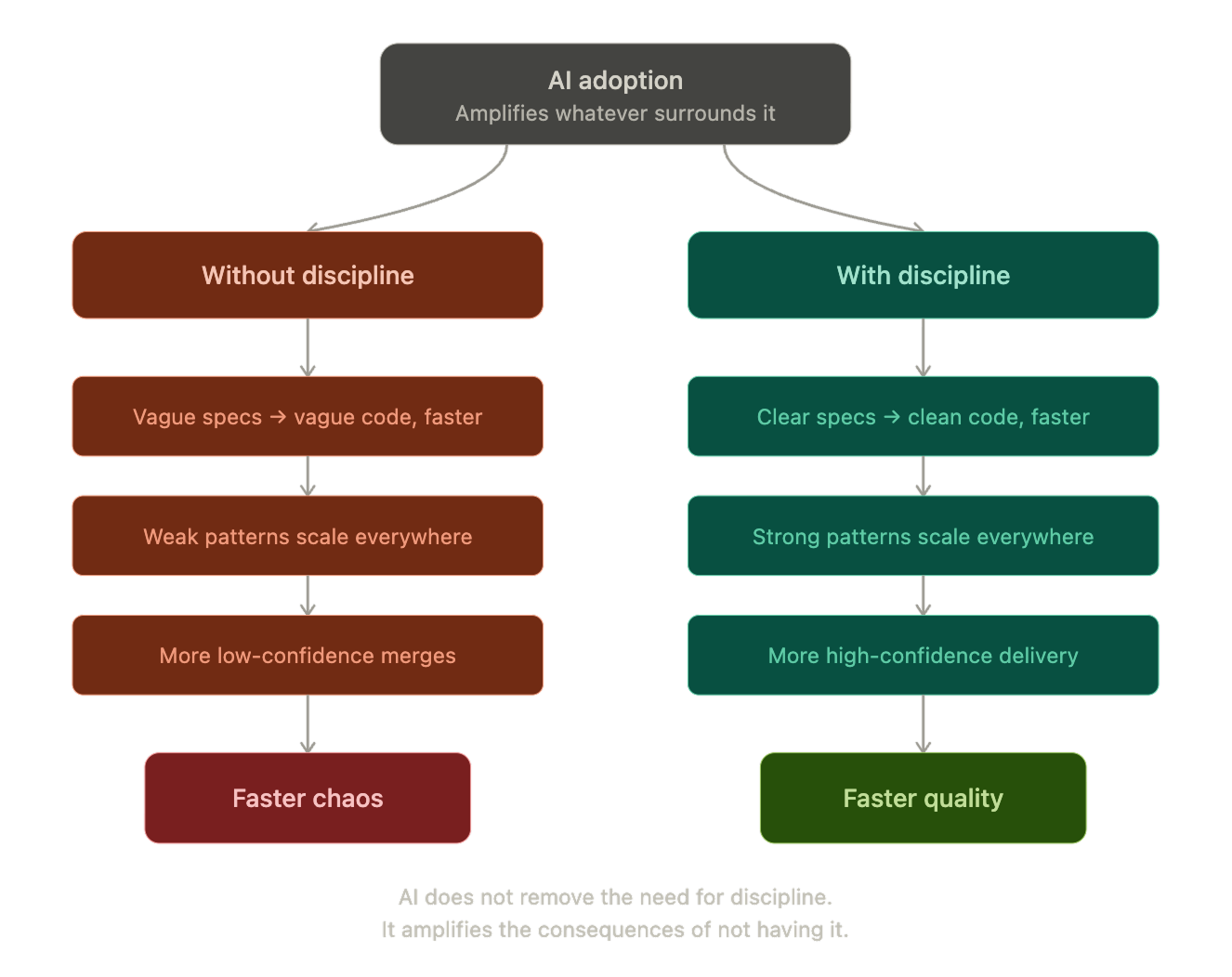

AI can absolutely increase delivery speed. It can also increase the speed at which a team creates inconsistency, shallow abstractions, scattered logic, and tech debt. It scales weak practices just as readily as good ones.

If requirements are vague, AI helps produce vague implementations faster. If the architecture is weak, AI helps spread that weakness across the codebase faster. If reviews are shallow, AI helps merge more low-confidence code faster.

That is why AI without engineering discipline creates faster chaos.

The biggest issue is usually not the model but the system around it

A lot of bad AI output is really a symptom of bad engineering context:

- unclear requirements and missing business rules

- weak module boundaries, inconsistent conventions

- outdated repo documentation

- no real review framework

In those conditions, AI fills the gaps. It guesses. It imitates nearby patterns, even when those patterns are bad. The result may look correct locally, while making the overall system worse.

Humans do that too. AI just gets there faster.

The real shift is upward, not away

The wrong mental model is to think the future belongs either to engineers writing everything manually or to people just prompting their way through the repo.

The better model is AI-first engineering. That means the engineer’s role shifts upward:

- shaping context

- defining constraints

- guiding the architecture

- validating outputs

The valuable engineer is not just the one who can code, but the one who can make AI produce good code consistently inside a healthy system.

That is a very different standard from vibe coding.

What becomes more important in AI-first engineering

This is the part I think teams need to take seriously.

If we want AI to be part of the normal engineering workflow, then some practices become even more important:

- strong file and module boundaries

- explicit conventions and specs

- architecture constraints AI must follow (e.g., feature folders, single responsibility per file)

- code review that checks whether a change fits the system, not just whether it works
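One way to turn a constraint like "feature folders" into something AI-generated changes must follow, rather than a habit reviewers have to remember, is to check it mechanically in CI. The sketch below is a minimal, hypothetical Python example. It assumes a layout with `src/features/<name>/` and `src/shared/`, treats each feature's `__init__.py` as its only public entry point, and takes an already-resolved import graph as a plain dict (parsing real imports is out of scope). The paths, names, and rules are illustrative assumptions, not a specific tool or the author's setup.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath
from typing import Dict, List, Optional

# Hypothetical project layout; adjust to your own conventions.
FEATURES_ROOT = PurePosixPath("src/features")
SHARED_ROOT = PurePosixPath("src/shared")
PUBLIC_ENTRY = "__init__.py"  # assumed: the only file other features may import


@dataclass(frozen=True)
class Violation:
    file: str
    imported: str
    reason: str


def is_under(path: PurePosixPath, root: PurePosixPath) -> bool:
    """True if `path` lives inside the `root` directory."""
    return path.parts[: len(root.parts)] == root.parts


def feature_of(path: PurePosixPath) -> Optional[str]:
    """Name of the feature folder a file belongs to, or None if outside src/features."""
    n = len(FEATURES_ROOT.parts)
    if not is_under(path, FEATURES_ROOT) or len(path.parts) <= n + 1:
        return None
    return path.parts[n]


def check_boundaries(imports: Dict[str, List[str]]) -> List[Violation]:
    """Flag imports that cross module boundaries the (hypothetical) architecture forbids."""
    violations = []
    for src, targets in imports.items():
        src_path = PurePosixPath(src)
        src_feature = feature_of(src_path)
        in_shared = is_under(src_path, SHARED_ROOT)
        for target in targets:
            tgt_path = PurePosixPath(target)
            tgt_feature = feature_of(tgt_path)
            if tgt_feature is None:
                continue  # target is not feature code, so no boundary applies
            if in_shared:
                # Rule 1: shared code must never depend on a feature.
                violations.append(Violation(
                    src, target, f"shared code must not depend on feature '{tgt_feature}'"))
            elif src_feature is not None and tgt_feature != src_feature:
                # Rule 2: across features, only the public entry point is allowed.
                if tgt_path != FEATURES_ROOT / tgt_feature / PUBLIC_ENTRY:
                    violations.append(Violation(
                        src, target, f"reaches into internals of feature '{tgt_feature}'"))
    return violations


# Illustrative import graph (made-up files).
EXAMPLE_IMPORTS = {
    "src/features/billing/invoice.py": [
        "src/shared/money.py",                # allowed: shared code
        "src/features/billing/tax.py",        # allowed: same feature
        "src/features/orders/__init__.py",    # allowed: public entry point
        "src/features/orders/repository.py",  # violation: another feature's internals
    ],
    "src/shared/money.py": [
        "src/features/billing/tax.py",        # violation: shared depends on a feature
    ],
}

if __name__ == "__main__":
    for v in check_boundaries(EXAMPLE_IMPORTS):
        print(f"{v.file} -> {v.imported}: {v.reason}")
```

Running it reports the two commented violations and nothing else. In practice the import graph would come from a real import parser or an existing dependency linter; the point is that the rule is written down and enforced, so it applies the same way to code written by people and code written by AI.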

The discipline does not go away. It moves: less energy spent typing every line by hand, more energy spent defining, constraining, and reviewing.

Final takeaway

The lesson I’ve learned is simple:

AI is the way, but it is not a shortcut around engineering rigor.

If anything, it makes rigor more important.

The teams that win will not be the ones using AI the most. They will be the ones who build an engineering system where AI can move fast without making the product, the codebase, and the team harder to manage.

AI does not remove the need for engineering discipline. It amplifies the consequences of not having it.