This post from Anthropic explains, very clearly, how we should be using AI in software development today. The key idea is simple: prompt engineering is fine for single, isolated questions, but real systems require context engineering. Once you move beyond one-off queries and into agents, workflows, or multi-step reasoning, the quality of what you put into the context window matters more than how clever your prompt is.

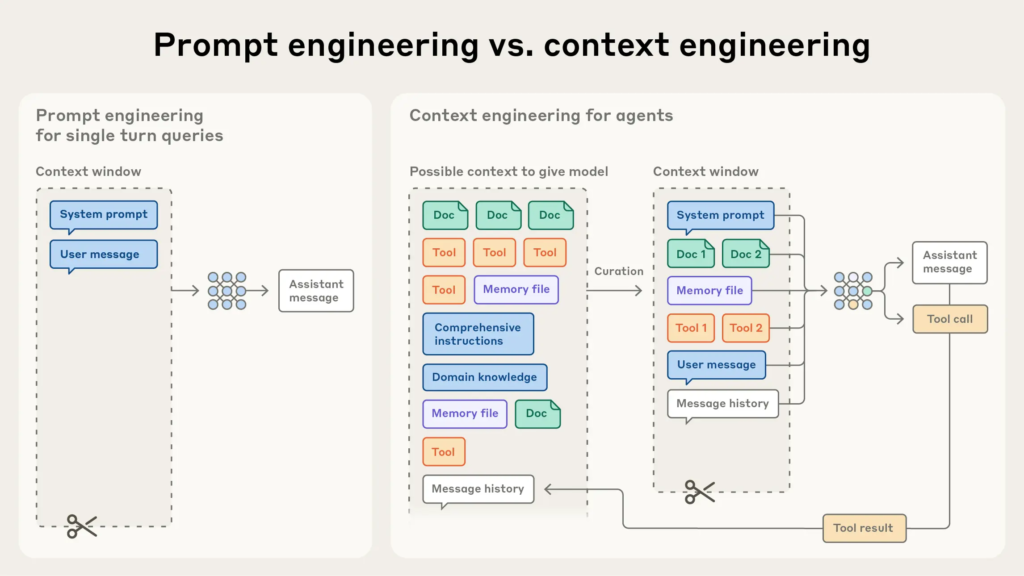

The diagram makes this obvious. On the left, prompt engineering relies almost entirely on a system prompt and a user message. On the right, context engineering treats the model as part of a system, curating documents, tools, memory, domain knowledge, and history before the model ever reasons. The model is not asked to guess; it is given what it needs to decide.
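
To make the right-hand side of the diagram concrete, here is a minimal sketch of assembling context before any model call. Every name in it (`ContextBundle`, `build_messages`, the field names) is hypothetical, and the output is a generic list of role/content dictionaries rather than any particular vendor's API.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBundle:
    """Hypothetical container for everything the model sees besides the user message."""
    system_prompt: str
    documents: list[str] = field(default_factory=list)
    tool_descriptions: list[str] = field(default_factory=list)
    memory_notes: list[str] = field(default_factory=list)
    history: list[dict[str, str]] = field(default_factory=list)


def _section(title: str, items: list[str]) -> str:
    """Render one labeled section, or nothing at all when there are no items."""
    if not items:
        return ""
    body = "\n".join(f"- {item}" for item in items)
    return f"\n\n## {title}\n{body}"


def build_messages(bundle: ContextBundle, user_message: str) -> list[dict[str, str]]:
    """Combine curated context, prior turns, and the new question into one message list."""
    system = bundle.system_prompt
    system += _section("Documents", bundle.documents)
    system += _section("Tools", bundle.tool_descriptions)
    system += _section("Memory", bundle.memory_notes)
    return [
        {"role": "system", "content": system},
        *bundle.history,  # earlier turns, oldest first
        {"role": "user", "content": user_message},
    ]
```

In this sketch the prompt-engineering case is simply a `ContextBundle` with only `system_prompt` set; the context-engineering case fills in the other fields deliberately before the model is called.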

This is where the dumb bar problem shows up. Prompting without proper context, real data, or constraints lowers the dumb bar so much that hallucinations become inevitable. As tasks grow in complexity, performance degrades, not because the model is bad, but because we are asking it to operate with missing inputs. In software terms, this is like calling a function without passing the required parameters and blaming the function when it fails.

A system that gets only vague or incomplete data behaves like a developer debugging in the dark: it fills gaps with guesses. For generative models, that is hallucination: confident but incorrect responses. The effect compounds with multi-step logic, tool use, and planning, where performance degrades quickly unless the context feeding the model is precise, relevant, and curated.

If your AI system feels unstable, overly creative, or hard to trust, the fix is rarely a better prompt. More often, it is raising the dumb bar by engineering the context: selecting the right information, limiting noise, grounding the model in real sources, and treating context as a first-class engineering concern.
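
As a rough illustration of "selecting the right information" and "limiting noise", the sketch below ranks candidate snippets against a query and keeps only the relevant ones that fit a size budget. It rests on loud assumptions: `score` and `select_context` are hypothetical names, plain word overlap stands in for a real retrieval method, and a character count stands in for a token budget.

```python
def score(query: str, snippet: str) -> int:
    """Crude relevance: how many distinct query words also appear in the snippet."""
    query_words = set(query.lower().split())
    snippet_words = set(snippet.lower().split())
    return len(query_words & snippet_words)


def select_context(query: str, snippets: list[str], max_chars: int) -> list[str]:
    """Keep the most relevant snippets that fit the budget and drop unrelated ones."""
    ranked = sorted(snippets, key=lambda s: score(query, s), reverse=True)
    chosen: list[str] = []
    used = 0
    for snippet in ranked:
        if score(query, snippet) == 0:
            break  # ranked order means everything from here on is unrelated noise
        if used + len(snippet) > max_chars:
            continue  # does not fit in the remaining budget; try the next one
        chosen.append(snippet)
        used += len(snippet)
    return chosen
```

The point of the design is that the filtering happens before the model is involved: anything with no overlap never reaches the context window, and the budget forces a choice about what matters most.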